| Author |

|

C0nw0nk

Joined: 07 Oct 2013

Posts: 241

Location: United Kingdom, London

|

Posted: Mon 20 Jan '14 21:46 Post subject: |

|

|

Sure thing, here you go.

http://imageshack.com/a/img22/4060/u2qg.png

Edit: the image was a bit big, so I just put a link.

Also, at the moment it's not under heavy load, but I do use WinCache (every other caching mechanism I tried caused problems).

When traffic increases in a few hours, when everyone gets home, I will take another snapshot.

Last edited by C0nw0nk on Mon 20 Jan '14 21:51; edited 1 time in total |

|


|

jimski

Joined: 18 Jan 2014

Posts: 204

Location: USSA

|

Posted: Mon 20 Jan '14 21:51 Post subject: |

|

|

Thanks a lot C0nw0nk,

I just tried the FcgidInitialEnv PHP_FCGI_CHILDREN 0

and it helped a lot. I'm still crashing when running phpinfo.php as a test file but at least now it crashes after serving 120,000 requests with concurrency of 1000.

I still can't believe that Windows Server 2003 32-bit running fcgid is so much faster than Windows Server 2012.

Last edited by jimski on Mon 20 Jan '14 21:54; edited 1 time in total |

|


|

C0nw0nk

Joined: 07 Oct 2013

Posts: 241

Location: United Kingdom, London

|

Posted: Mon 20 Jan '14 21:52 Post subject: |

|

|

| jimski wrote: | Thanks a lot C0nw0nk,

I just tried the FcgidInitialEnv PHP_FCGI_CHILDREN 0

and it helped a lot. I'm still crashing when running phpinfo.php as a test file but at least now it crashes after serving 120,000 requests. |

What is your php.ini config? I can send you mine if that helps. I use WinCache and no other caching mechanism, because all the others caused me problems. WinCache is the only one that does not.

Also, my server is Windows Server 2008. I do have a Windows Server 2012 machine, but it is for MySQL queries. |

|


|

jimski

Joined: 18 Jan 2014

Posts: 204

Location: USSA

|

Posted: Mon 20 Jan '14 21:59 Post subject: |

|

|

I don't have any caching so far.

My php.ini is the default.

If you could email me your php.ini it would be cool.

Thank you

Last edited by jimski on Mon 20 Jan '14 22:11; edited 1 time in total |

|


|

C0nw0nk

Joined: 07 Oct 2013

Posts: 241

Location: United Kingdom, London

|

Posted: Mon 20 Jan '14 22:07 Post subject: |

|

|

| Just emailed it to you. You will find that if you pick up a caching mechanism, especially WinCache, the performance boost you receive is amazing. |

|


|

jimski

Joined: 18 Jan 2014

Posts: 204

Location: USSA

|

Posted: Mon 20 Jan '14 22:11 Post subject: |

|

|

Thank you again. I received your email.

I will do some more hacking. |

|


|

C0nw0nk

Joined: 07 Oct 2013

Posts: 241

Location: United Kingdom, London

|

Posted: Mon 20 Jan '14 22:16 Post subject: |

|

|

| jimski wrote: | | Thank you again. I received your email. |

My pleasure. I have no idea if the connections are concurrent; I do not use Apache as a frontend, I use nginx. Apache delivers the dynamic content from the backend.

But as the mod_fcgid web page explains, there is good reason not to use APC.

| Quote: | | The popular APC opcode cache for PHP cannot share a cache between PHP FastCGI processes unless PHP manages the child processes. Thus, the effectiveness of the cache is limited with mod_fcgid; concurrent PHP requests will use different opcode caches. |

I used Zend OPcache and it did OK, but according to Wikipedia it did not have a file cache.

http://en.wikipedia.org/wiki/List_of_PHP_accelerators#Comparison_of_features

So now I use only WinCache.

I would recommend WinCache to everyone, though.

I sent you another email with the WinCache statistics (realtime). |

|


|

jimski

Joined: 18 Jan 2014

Posts: 204

Location: USSA

|

Posted: Wed 22 Jan '14 20:08 Post subject: |

|

|

FINAL CONCLUSION:

After everything I have read and tried, I don't believe that 10,000 concurrent connections can be done on Windows while running Apache and fcgid. I don't believe that IIS can do that with PHP either.

Some people have accomplished c10k with ASP.NET, but I have yet to see one article that claims c10k on IIS with PHP.

If anybody has a different experience, then please share it. |

|


|

C0nw0nk

Joined: 07 Oct 2013

Posts: 241

Location: United Kingdom, London

|

Posted: Wed 22 Jan '14 22:13 Post subject: |

|

|

| jimski wrote: | FINAL CONCLUSION:

If anybody has a different experience, then please share it. |

Here is one way.

Install nginx (download from www.nginx.org).

You will need to install and run 10 Apache instances on different ports.

example Apache config :

Add +1 to the port in each Apache config, so that Apache server number 1 listens on port 8001, number 2 on 8002, and so on up to number 10 on 8010 (the ports used in the upstream block below).

When all your Apache servers are running with the same config but different ports, set up your nginx server to listen on port 80.

nginx will accept all traffic on behalf of Apache and route it via an upstream to the Apache servers with the following config.

| Code: |
upstream web_rack {
    server 127.0.0.1:8001;
    server 127.0.0.1:8002;
    server 127.0.0.1:8003;
    server 127.0.0.1:8004;
    server 127.0.0.1:8005;
    server 127.0.0.1:8006;
    server 127.0.0.1:8007;
    server 127.0.0.1:8008;
    server 127.0.0.1:8009;
    server 127.0.0.1:8010;
}

server {
    listen 80;
    server_name www.domain.com;

    location / {
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $remote_addr;
        proxy_set_header Host $host;
        proxy_pass http://web_rack;
    }
}
|
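A possible variation on the proxy block above, offered as a sketch rather than as part of the poster's setup: nginx's built-in $proxy_add_x_forwarded_for variable appends the client address to any X-Forwarded-For header that is already present, instead of overwriting it. That can matter when another proxy sits in front of nginx. The web_rack upstream name is taken from the config above.

```nginx
location / {
    proxy_set_header Host $host;
    proxy_set_header X-Real-IP $remote_addr;
    # Appends $remote_addr to an incoming X-Forwarded-For header,
    # or sets the header to $remote_addr if the request had none
    proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
    proxy_pass http://web_rack;
}
```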

Now you can accept 10k concurrent connections.

Also, to tune nginx's concurrent connections, if you want 10k this should do it.

| Code: |
events {
    worker_connections 100000;
    multi_accept on;
}

worker_rlimit_nofile 2000000;
|

Call that overkill at 100,000 concurrent nginx connections, but I like overkill! |

|


|

jimski

Joined: 18 Jan 2014

Posts: 204

Location: USSA

|

Posted: Wed 22 Jan '14 22:29 Post subject: |

|

|

Interesting idea.

My understanding is that nginx on Windows is limited and can't support more than 1024 simultaneous connections.

http://nginx.org/en/docs/windows.html#known_issues

And even 10 Apache servers running 100 php-cgi.exe processes each would account for only 1000 concurrent connections at best.

But I like your solution anyway.

Last edited by jimski on Wed 22 Jan '14 22:31; edited 1 time in total |

|


|

C0nw0nk

Joined: 07 Oct 2013

Posts: 241

Location: United Kingdom, London

|

Posted: Wed 22 Jan '14 22:31 Post subject: |

|

|

Read the bottom of my post: you can edit the nginx config to accept more concurrent connections.

At least I am pretty sure nginx connections are concurrent.

Last edited by C0nw0nk on Wed 22 Jan '14 22:35; edited 1 time in total |

|


|

jimski

Joined: 18 Jan 2014

Posts: 204

Location: USSA

|

Posted: Wed 22 Jan '14 22:35 Post subject: |

|

|

I have read your post, but this is what the nginx page says:

Known issues

Although several workers can be started, only one of them actually does any work.

A worker can handle no more than 1024 simultaneous connections.

The cache and other modules which require shared memory support do not work on Windows Vista and later versions due to address space layout randomization being enabled in these Windows versions.
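Taken at face value, the known issues quoted above suggest a Windows config along the following lines. This is only a sketch, assuming those documented limits apply to the build in use. Note also that, per the nginx documentation for worker_connections, the number covers every connection a worker holds, including connections to proxied backends, not only client connections.

```nginx
# Only one worker does any work on Windows, per the known-issues list
worker_processes 1;

events {
    # Documented per-worker ceiling on Windows; this count includes
    # connections to proxied servers as well as client connections
    worker_connections 1024;
}
```

With nginx proxying every request to Apache, each in-flight request holds two of those connections (one to the client, one to the backend), so the practical number of simultaneous proxied clients is lower than 1024.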

Last edited by jimski on Wed 22 Jan '14 22:36; edited 1 time in total |

|


|

C0nw0nk

Joined: 07 Oct 2013

Posts: 241

Location: United Kingdom, London

|

Posted: Wed 22 Jan '14 22:36 Post subject: |

|

|

Good thing you run Windows Server 2003 then.

Also, you can apply the same concept to nginx: run several nginx instances to reach 10k concurrent connections, then point each nginx at an Apache server.

It will be a hell of a puzzle-box config, but it will achieve it.

It is a complex setup for something that should be fixed, but I'm sure nginx will get there soon.

http://nginx.org/en/docs/windows.html

http://wiki.nginx.org/Pitfalls

Also, I don't know if they have updated that page or what, but according to other sites, people running nginx on Windows do go beyond the 1k concurrent connection limit.

https://www.ruby-forum.com/topic/4416791#1120688 |

|


|

jimski

Joined: 18 Jan 2014

Posts: 204

Location: USSA

|

Posted: Thu 23 Jan '14 5:37 Post subject: |

|

|

I think you are right. Looks like there is an experimental version of Nginx that may support more connections but the latest official version doesn't seem to have this ability.

http://nginx.org/en/download.html |

|


|

C0nw0nk

Joined: 07 Oct 2013

Posts: 241

Location: United Kingdom, London

|

Posted: Thu 23 Jan '14 21:42 Post subject: |

|

|

| jimski wrote: | I think you are right. Looks like there is an experimental version of Nginx that may support more connections but the latest official version doesn't seem to have this ability.

http://nginx.org/en/download.html |

Yes, well, on my servers I run the Mainline version.

I also use Cloudflare, and Cloudflare says 3000+ concurrent connections per IP address of their servers.

So that's more than 10k from Cloudflare to my sites. |

|


|

C0nw0nk

Joined: 07 Oct 2013

Posts: 241

Location: United Kingdom, London

|

Posted: Fri 24 Jan '14 18:12 Post subject: |

|

|

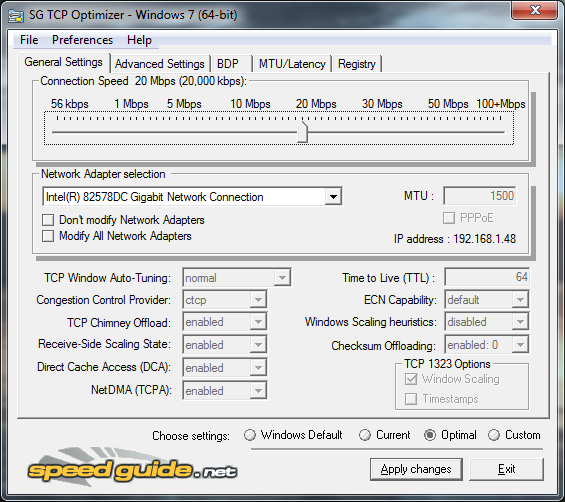

Also, I recommend you configure your registry TCP stack to the optimal settings with this tool.

http://www.speedguide.net/downloads.php

SG TCP Optimizer

You will need to reboot your machine for the registry settings to take effect. |

|


|

jimski

Joined: 18 Jan 2014

Posts: 204

Location: USSA

|

Posted: Fri 24 Jan '14 20:33 Post subject: |

|

|

Would you be so kind as to email me your nginx configuration file?

I will follow your suggestion of placing nginx in front of Apache.

BTW, this is a nice tool. I was tweaking the TCP stack manually to remove some of the limitations but this tool is very cool. |

|


|

C0nw0nk

Joined: 07 Oct 2013

Posts: 241

Location: United Kingdom, London

|

Posted: Sat 25 Jan '14 11:28 Post subject: |

|

|

Also, with nginx you will need a program, a batch file or something similar to make it run at server boot.

My nginx configuration is very complex, so I don't think it would suit you, but you are welcome to try it.

Everyone's configuration is for their own needs. Mine is for delivering static content, passing all dynamic content to Apache, caching all static content and maximizing performance. (I don't think my config will work for the sites on your server.)

But to save me digging through my emails, I will paste my config here.

| Code: |
#user nobody;
worker_processes 1;

#error_log logs/error.log;
#error_log logs/error.log notice;
#error_log logs/error.log info;
error_log logs/error.log crit;

#pid logs/nginx.pid;

events {
    worker_connections 1900000;
    multi_accept on;
}

worker_rlimit_nofile 2000000;

http {
    include mime.types;
    default_type application/octet-stream;

    #log_format main '$remote_addr - $remote_user [$time_local] "$request" '
    # '$status $body_bytes_sent "$http_referer" '
    # '"$http_user_agent" "$http_x_forwarded_for"';

    #access_log logs/access.log main;
    access_log off;

    sendfile on;
    tcp_nopush on;
    tcp_nodelay on;

    #keepalive_timeout 0;
    #keepalive_timeout 10;
    keepalive_requests 100000;

    client_max_body_size 1000m;
    server_tokens off;
    etag off;

    #gzip on;
    #gzip_static on;
    #gzip_comp_level 3;
    #gzip_disable "MSIE [1-6]\.";
    #gzip_http_version 1.1;
    #gzip_vary on;
    #gzip_proxied any;
    #gzip_types text/plain text/css text/xml text/javascript text/x-component text/cache-manifest application/json application/javascript application/x-javascript application/xml application/rss+xml application/xml+rss application/xhtml+xml application/atom+xml application/wlwmanifest+xml application/x-font-ttf image/svg+xml image/x-icon font/opentype app/vnd.ms-fontobject;
    #gzip_min_length 1000;

    open_file_cache max=900000 inactive=10m;
    open_file_cache_valid 20m;
    open_file_cache_min_uses 1;
    open_file_cache_errors on;

    #proxy_intercept_errors on;
    #server_name_in_redirect off;
    #reset_timedout_connection on;

    #upstream web_rack {
    # server 127.0.0.1:80;
    # server 127.0.0.1:8000;
    #}

    server {
        listen 80;
        server_name domain.com www.domain.com;

        #charset koi8-r;
        #access_log logs/host.access.log main;

        root c:/server/websites/ps/public_www;
        index index.php index.html index.htm default.html default.htm;
        #index index.php;

        location / {
            root c:/server/websites/ps/public_www;
            #location ~* \.(php|html|htm)$ {
            #try_files $uri $uri/ /index.php;
            proxy_set_header X-Real-IP $remote_addr;
            proxy_set_header X-Forwarded-For $remote_addr;
            proxy_set_header Host $host;
            #proxy_pass http://web_rack;
            #proxy_pass http://127.0.0.1:80;
            proxy_pass http://127.0.0.1:8000;
            expires 10s;
            max_ranges 0;

            if ( $args ~ 'option=com_hwdmediashare&task=addmedia.upload([a-zA-Z0-9-_=&])' ) {
                proxy_pass http://127.0.0.1:8000;
            }
        }

        location ~ \.flv$ {
            flv;
            limit_rate 400k;
            expires max;
        }

        location ~ \.mp4$ {
            limit_rate 400k;
            expires max;
        }

        #error_page 404 /404.html;
        #error_page 404 http://www.ptp22.com/seo.php?username=c0nw0nk&format=ptp;

        # redirect server error pages to the static page /50x.html
        #
        #error_page 500 502 503 504 /50x.html;
        #location = /50x.html {
        # root html;
        #}

        # proxy the PHP scripts to Apache listening on 127.0.0.1:80
        #
        #location ~ \.php$ {
        location ~* \.(ico|png|jpg|jpeg|gif|flv|mp4|avi|m4v|mov|divx|webm|ogg|mp3|mpeg|mpg|swf|css|js|txt)$ {
            root c:/server/websites/ps/public_www;
            expires max;
        }

        # pass the PHP scripts to FastCGI server listening on 127.0.0.1:9000
        #
        #location ~ \.php$ {
        # root html;
        # fastcgi_pass 127.0.0.1:9000;
        # fastcgi_index index.php;
        # fastcgi_param SCRIPT_FILENAME /scripts$fastcgi_script_name;
        # include fastcgi_params;
        #}

        # deny access to .htaccess files, if Apache's document root
        # concurs with nginx's one
        #
        location ~ /\.ht {
            #deny all;
            return 404;
        }

        location ~ ^/(xampp|security|phpmyadmin|licenses|webalizer|server-status|server-info|cpanel|configuration.php) {
            #deny all;
            return 404;
        }

        #location ~ ^/(register) {
        #root c:/server/websites/ps/public_www;
        #proxy_set_header X-Real-IP $remote_addr;
        #proxy_set_header X-Forwarded-For $remote_addr;
        #proxy_set_header Host $host;
        #proxy_pass http://127.0.0.1:80;
        #expires 10s;
        #max_ranges 0;
        #}

        #location ~ ^/(forums) {
        #root c:/server/websites/ps/public_www;
        #proxy_set_header X-Real-IP $remote_addr;
        #proxy_set_header X-Forwarded-For $remote_addr;
        #proxy_set_header Host $host;
        #proxy_pass http://127.0.0.1:80;
        #expires 10s;
        #max_ranges 0;
        #}

        #location ~ ^/(administrator) {
        #root c:/server/websites/ps/public_www;
        #proxy_set_header X-Real-IP $remote_addr;
        #proxy_set_header X-Forwarded-For $remote_addr;
        #proxy_set_header Host $host;
        #proxy_pass http://127.0.0.1:8000;
        #expires 10s;
        #max_ranges 0;
        #}
    }
}
|

As you can see from my config, I serve video streams, and I put limits on the download rate of flv and mp4 files, as well as expires max, which means the static content will not expire for 10 years once stored in the visitor's browser.

Even for dynamic web pages I tell it to cache them for 10 seconds.
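For reference, this is what the two expires settings translate to, according to the nginx documentation for the expires directive. The location patterns here are shortened for illustration and are not the poster's full rules; the backend address is the one from the config above.

```nginx
# Static files: far-future caching
location ~* \.(css|js|png|jpg)$ {
    # Sends "Expires: Thu, 31 Dec 2037 23:55:55 GMT"
    # and "Cache-Control: max-age=315360000" (10 years)
    expires max;
}

# Dynamic pages proxied to Apache: cache briefly
location / {
    proxy_pass http://127.0.0.1:8000;
    # Sends "Cache-Control: max-age=10" and an Expires header 10 seconds ahead
    expires 10s;
}
```

These headers only tell browsers and intermediate caches how long they may reuse a response; nginx itself stores nothing unless proxy_cache is configured.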

I would also recommend putting your domain name behind Cloudflare if you have not already done so.

Also, with my config on my server, nginx never uses more than 500-700 MB of RAM and CPU is always at 0%.

It takes the stress of delivering static content off Apache, so now all my Apache handles is dynamic content (PHP and HTML only); everything else goes to nginx, which is faster. |

|


|

jimski

Joined: 18 Jan 2014

Posts: 204

Location: USSA

|

Posted: Sat 25 Jan '14 22:32 Post subject: |

|

|

| Thank you, that's very kind. |

|


|

C0nw0nk

Joined: 07 Oct 2013

Posts: 241

Location: United Kingdom, London

|

Posted: Sun 26 Jan '14 14:55 Post subject: |

|

|

| jimski wrote: | | Thank you, that's very kind. |

Also, to block people from accessing Apache directly via my server's IP, I made this virtual host to intercept anyone connecting to the server's IP and block them.

If you use this, it has to be the first virtual host in the config file, before all the others.

| Code: |
<VirtualHost *:*>
    ServerName default
    #Redirect 404 /
    <Directory />
        # Apache 2.2 access-control syntax; Apache 2.4 prefers "Require all denied"
        Order allow,deny
        Deny from all
        ErrorDocument 403 http://www.domain.com/error.html
    </Directory>
</VirtualHost>
|

Also, in my nginx config above I apply the following rules for additional security.

| Code: |
location ~ /\.ht {
    #deny all;
    return 404;
}

location ~ ^/(xampp|security|phpmyadmin|licenses|webalizer|server-status|server-info|cpanel|configuration.php) {
    #deny all;
    return 404;
}
|

nginx also blocks direct IP access: if you try to connect to nginx via the server's IP it blocks you, so you can only connect through the domains. But I like to do it on Apache too. |
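The nginx config posted earlier in the thread does not show the rule that rejects direct-IP requests. One common way to get that behaviour, sketched here as an assumption rather than the poster's actual setup, is a catch-all default server that handles any request whose Host header matches none of the configured server_name values:

```nginx
server {
    # default_server makes this block the fallback for port 80
    listen 80 default_server;
    # "_" is just a placeholder name that never matches a real host
    server_name _;
    # 444 is an nginx-specific code: close the connection without a response
    return 444;
}
```

Requests for domain.com or www.domain.com still reach the named server block; anything else, including a bare IP address, lands here.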

|

| Back to top |

|